If you want more people to complete your survey, should you offer an incentive? The short answer from decades of research is yes. The longer answer is that the type of incentive, when you offer it, and how you deliver it matter far more than the dollar amount.

This is not guesswork. Survey incentive effects are among the most studied topics in research methodology. Multiple meta-analyses covering hundreds of experiments give us clear, replicable findings on what works and what does not.

The Core Finding: Incentives Increase Response Rates

Church (1993) published one of the earliest comprehensive meta-analyses of incentive effects, reviewing 38 experimental studies. The finding was unambiguous: incentives significantly increased mail survey response rates, with prepaid monetary incentives producing an average improvement of 19.1 percentage points.

Singer, Van Hoewyk, Gebler, Raghunathan, and McGonagle (1999) extended this work and found similar effects. Across their review, incentives of any kind improved response rates compared to no-incentive controls.

The most comprehensive review to date is Singer and Ye (2013), published in the Annals of the American Academy of Political and Social Science. They reviewed over 40 years of experimental evidence and confirmed that incentives reliably increase survey response rates across virtually all survey modes, populations, and contexts.

This is not a contested finding. The question is not whether incentives work but which incentive strategies produce the best return.

Prepaid vs Promised: The Most Important Distinction

The single most consistent finding in incentive research is that prepaid incentives outperform promised incentives by a wide margin.

A prepaid incentive is delivered with the survey invitation, before the respondent decides whether to participate. A promised incentive is offered as a reward for completing the survey.

Church (1993) found that prepaid monetary incentives increased response rates by an average of 19.1 percentage points, while promised incentives increased response rates by only 3.8 percentage points. That is a five-to-one difference in effectiveness.
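To see what that gap means in returned questionnaires, here is a minimal Python sketch. The 19.1 and 3.8 point gains are the Church (1993) figures quoted above. The 1,000-piece mailing and the 30% baseline response rate are illustrative assumptions, not values from any study.

```python
def expected_completes(mailed: int, baseline_rate: float, gain_points: float) -> int:
    """Expected returned surveys after adding a percentage-point gain to a baseline rate."""
    rate = min(baseline_rate + gain_points / 100.0, 1.0)  # a rate cannot exceed 100%
    return round(mailed * rate)


MAILED = 1000      # hypothetical mailing size
BASELINE = 0.30    # hypothetical response rate with no incentive

PREPAID_GAIN = 19.1   # percentage points, as quoted from Church (1993)
PROMISED_GAIN = 3.8   # percentage points, as quoted from Church (1993)

no_incentive = expected_completes(MAILED, BASELINE, 0.0)
prepaid = expected_completes(MAILED, BASELINE, PREPAID_GAIN)
promised = expected_completes(MAILED, BASELINE, PROMISED_GAIN)

print(f"No incentive: {no_incentive} completes")
print(f"Promised:     {promised} completes")
print(f"Prepaid:      {prepaid} completes")
print(f"Gain ratio:   {PREPAID_GAIN / PROMISED_GAIN:.1f} to 1")
```

Under these assumptions the prepaid mailing returns 491 surveys against 338 for the promised one. A different baseline shifts the totals but not the 19.1 versus 3.8 point gap.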

Singer and Ye (2013) confirmed this gap across a much larger body of evidence. Prepaid incentives consistently produced response rate improvements two to three times larger than equivalent promised incentives.

The mechanism is reciprocity. When someone receives something of value before being asked to act, they feel a social obligation to reciprocate. This is a well-documented psychological principle (Cialdini, 2009) that operates below conscious awareness. A dollar bill enclosed in an envelope triggers reciprocity. A promise of a gift card after completion does not.

Cash Outperforms Non-Cash Incentives

Church (1993) found that monetary incentives (cash) produced larger response rate gains than non-monetary incentives (pens, keychains, lottery entries, donation pledges). This finding has been replicated consistently.

Mercer, Caporaso, Cantor, and Townsend (2015), in a study for the Bureau of Labor Statistics, found that cash incentives outperformed equivalent-value non-cash alternatives across multiple survey types.

The exception is when the non-cash incentive has specific relevance to the respondent. A study offering a free health screening as an incentive for a health survey may perform well because the incentive is directly related to the survey topic. But for general-purpose surveys, cash is the most reliable choice.

For mailed paper surveys, this creates a practical advantage. You can enclose a small denomination bill or coin with the survey. The respondent holds something tangible before they even read the first question. This physical, prepaid incentive is the most effective combination the research supports.

How Much Is Enough?

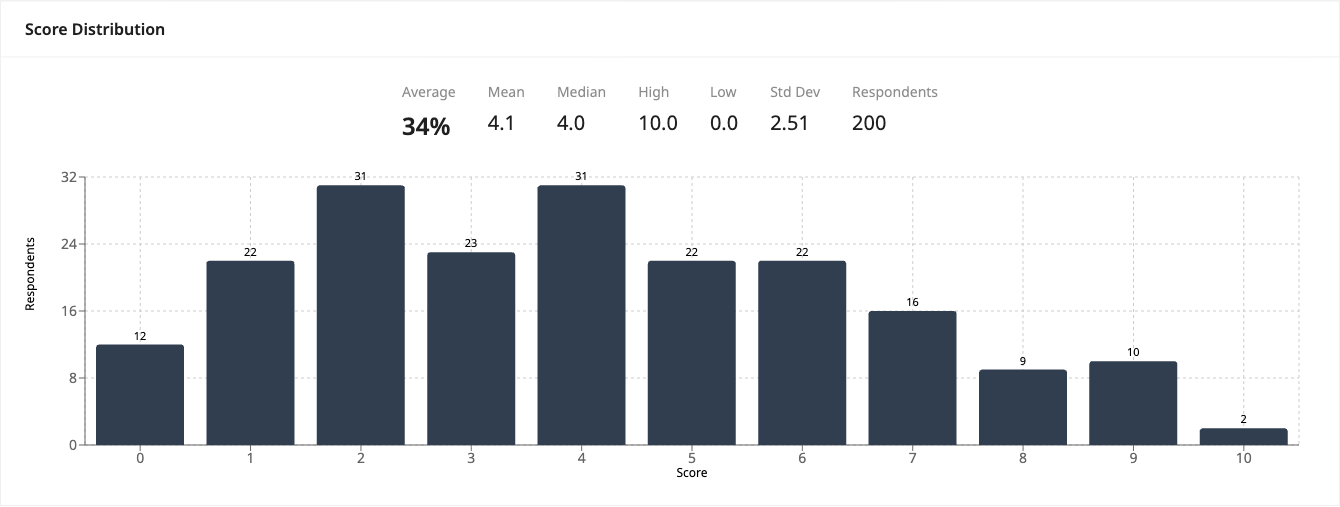

The relationship between incentive size and response rate is not linear. Doubling the incentive does not double the response gain.

Singer and Ye (2013) found that increasing incentive amounts produced diminishing returns. Moving from $0 to $1 produced a larger marginal effect than moving from $1 to $5, which in turn produced a larger effect than moving from $5 to $10.

Mercer et al. (2015) found that for the American Community Survey, a $5 prepaid incentive was nearly as effective as higher amounts. The act of receiving something mattered more than the dollar value.

For most research and institutional surveys, $1 to $5 prepaid is the practical sweet spot. The cost per additional completed survey is lowest in this range. Higher incentives produce marginally better response rates but at a disproportionately higher total cost.
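The cost argument can be checked with simple arithmetic. The sketch below computes the incentive cost per additional completed survey for several prepaid amounts. Every response rate in it is a hypothetical placeholder chosen only to show a diminishing-returns shape, so substitute figures from your own pilot or from the literature. It also assumes printing and postage are identical across amounts, and that the incentive goes to every mailed survey because it is prepaid.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Arm:
    incentive: float       # prepaid dollars enclosed with each mailed survey
    response_rate: float   # fraction of mailed surveys returned (hypothetical)


def cost_per_additional_complete(arm: Arm, reference: Arm) -> float:
    """Extra incentive dollars spent per extra completed survey, relative to a reference arm."""
    extra_rate = arm.response_rate - reference.response_rate
    if extra_rate <= 0:
        raise ValueError("arm must have a higher response rate than the reference")
    # Prepaid money goes to everyone in the mailing, so both cost and completes
    # scale with mailing size and the mailing size cancels out of the ratio.
    return (arm.incentive - reference.incentive) / extra_rate


# Hypothetical experiment arms: (dollars enclosed, response rate)
ARMS = [Arm(0.0, 0.30), Arm(1.0, 0.40), Arm(5.0, 0.50), Arm(10.0, 0.53)]
control = ARMS[0]

for previous, arm in zip(ARMS, ARMS[1:]):
    vs_control = cost_per_additional_complete(arm, control)
    vs_previous = cost_per_additional_complete(arm, previous)
    print(f"${arm.incentive:>2.0f}: ${vs_control:6.2f} per extra complete vs no incentive, "
          f"${vs_previous:6.2f} vs the previous amount")
```

With these placeholder rates, each extra complete costs about $10 at the $1 level, $25 at $5, and over $43 at $10. The step from $5 to $10 alone costs roughly $167 per extra complete, which is the diminishing-returns pattern described above.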

Paper Surveys Have a Built-In Incentive Advantage

The incentive research has a clear implication for survey mode choice. Mailed paper surveys are uniquely well-suited to the most effective incentive strategy: prepaid cash enclosed with the invitation.

You cannot enclose a dollar bill in an email. Digital survey invitations can promise lottery entries, gift card codes, or charitable donations, but these are all promised incentives, not prepaid ones. The research consistently shows promised incentives are far less effective.

Dillman, Smyth, and Christian (2014) specifically recommend enclosing a small cash incentive with mailed questionnaires as part of their Tailored Design Method. Their experimental data shows that this combination, a well-designed paper questionnaire with a prepaid cash incentive, produces response rates that online-only approaches struggle to match.

This does not mean every paper survey needs an incentive. Many surveys achieve adequate response rates without them, particularly those administered in group settings like classrooms or events. But when you need to maximize participation from a distributed population contacted by mail, prepaid cash is the most evidence-based strategy available, and it only works with paper.

Conditional vs Unconditional Incentives

A related distinction is between conditional incentives (given only to respondents who meet certain criteria or complete specific sections) and unconditional incentives (given to everyone who receives the survey).

Research from Göritz (2006) on web surveys found that unconditional promised incentives slightly outperformed conditional ones. The explanation follows the same reciprocity logic: a gift freely given creates more obligation than a transactional reward.

For paper surveys, all prepaid incentives are inherently unconditional, since the money arrives with the questionnaire regardless of whether the respondent completes it. This is the optimal configuration according to the research.

Incentive Effects Across Populations

Incentive effects are not uniform across demographics. Several studies have found that incentives have the largest impact on groups that are otherwise least likely to respond:

- Low-income respondents show larger response increases from monetary incentives (Singer et al., 1999).

- Young adults, who typically have the lowest survey response rates, show above-average incentive effects (Mercer et al., 2015).

- Minority populations that are underrepresented in survey samples show greater responsiveness to incentives (Groves et al., 2006).

This means incentives do not just increase overall response rates. They can also reduce nonresponse bias by bringing underrepresented groups into the sample. For researchers concerned about sample representativeness, this is a significant methodological benefit.
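The link between differential incentive effects and sample composition can be shown with a small calculation. In this sketch the population shares and response rates are invented for illustration and do not come from the cited studies. The only feature taken from the text is that the incentive raises response more in the group that responds least.

```python
def respondent_shares(population_shares: dict[str, float],
                      response_rates: dict[str, float]) -> dict[str, float]:
    """Share of each group among respondents, given population shares and group response rates."""
    responding = {g: population_shares[g] * response_rates[g] for g in population_shares}
    total = sum(responding.values())
    return {g: r / total for g, r in responding.items()}


# All figures below are hypothetical.
POPULATION = {"low_income": 0.40, "other": 0.60}
RATES_NO_INCENTIVE = {"low_income": 0.20, "other": 0.40}
RATES_WITH_INCENTIVE = {"low_income": 0.35, "other": 0.48}

without = respondent_shares(POPULATION, RATES_NO_INCENTIVE)
with_incentive = respondent_shares(POPULATION, RATES_WITH_INCENTIVE)

for label, shares in [("no incentive", without), ("prepaid incentive", with_incentive)]:
    shortfall = POPULATION["low_income"] - shares["low_income"]
    print(f"{label}: low-income share of respondents {shares['low_income']:.1%}, "
          f"shortfall vs population {shortfall:.1%}")
```

In this made-up example the low-income group is 40% of the population but only 25% of respondents without an incentive. With the incentive its share rises to about 33%, roughly halving the shortfall, even though both groups respond more.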

What Does Not Work

The research also identifies incentive strategies with weak or no effects:

- Lottery or prize draw entries produce minimal response rate improvement. The expected value is too low and the reward is too uncertain (Singer & Ye, 2013).

- Charitable donations on behalf of the respondent have inconsistent effects and generally underperform direct cash incentives (Warriner, Goyder, Gjertsen, Hohner, & McSpurren, 1996).

- Non-monetary tokens (pens, magnets, stickers) have small positive effects but are much less effective than equivalent-value cash (Church, 1993).

- Very large promised incentives ($50+) can sometimes backfire by creating suspicion about the survey's legitimacy (Singer & Couper, 2008).

Practical Recommendations

Based on the research:

- Use prepaid incentives rather than promised rewards whenever your budget and delivery method allow.

- Use cash rather than non-monetary alternatives for the most reliable effect.

- Keep amounts modest ($1 to $5 for most surveys). Diminishing returns set in quickly.

- Combine incentives with good survey design. An incentive improves response rates, but a poorly designed survey with an incentive still underperforms a well-designed survey with the same incentive.

- Consider paper delivery for surveys where incentive-driven response maximization matters. The prepaid cash strategy is only practical with physical mail.

From Paper to Data

PaperSurvey.io supports the full mailed survey workflow. Design your questionnaire in the online builder, print and mail it with your enclosed incentive, and process returned forms automatically when they come back. Upload scanned responses via browser, email, or Dropbox, and export clean data to Excel, CSV, or SPSS.

The incentive gets people to respond. The technology gets their responses into your dataset without manual data entry.

References

- Church, A. H. (1993). Estimating the effect of incentives on mail survey response rates: A meta-analysis. Public Opinion Quarterly, 57(1), 62-79.

- Cialdini, R. B. (2009). Influence: Science and Practice (5th ed.). Pearson.

- Dillman, D. A., Smyth, J. D., & Christian, L. M. (2014). Internet, Phone, Mail, and Mixed-Mode Surveys: The Tailored Design Method (4th ed.). Wiley.

- Göritz, A. S. (2006). Incentives in web studies: Methodological issues and a review. International Journal of Internet Science, 1(1), 58-70.

- Groves, R. M., Singer, E., & Corning, A. (2006). Leverage-saliency theory of survey participation. Public Opinion Quarterly, 64(3), 299-308.

- Mercer, A., Caporaso, A., Cantor, D., & Townsend, R. (2015). How much gets you how much? Monetary incentives and response rates in household surveys. Public Opinion Quarterly, 79(1), 105-129.

- Singer, E., & Couper, M. P. (2008). Do incentives exert undue influence on survey participation? Journal of Empirical Research on Human Research Ethics, 3(3), 49-56.

- Singer, E., Van Hoewyk, J., Gebler, N., Raghunathan, T., & McGonagle, K. (1999). The effect of incentives on response rates in interviewer-mediated surveys. Journal of Official Statistics, 15(2), 217-230.

- Singer, E., & Ye, C. (2013). The use and effects of incentives in surveys. Annals of the American Academy of Political and Social Science, 645(1), 112-141.

- Warriner, K., Goyder, J., Gjertsen, H., Hohner, P., & McSpurren, K. (1996). Charities, no; lotteries, no; cash, yes. Public Opinion Quarterly, 60(4), 542-562.
